20 Jun 2020

Today I would like to talk about Rebus, a simple and lean message bus implementation for .NET. Originally developed by Mogens Heller Grabe and supported by the community, Rebus is robust and works well with a minimum level of configuration, but its main strength is extensibility. With this in mind, Rebus offers many ways to customize things like:

- transport

- subscriptions

- logging

- serialization

- encryption

- and more…

If you want to read about the basics of Rebus, please check the official documentation wiki.

The central concept in Rebus is the transport. Basically, the transport is the mechanism used to transfer your messages. You can choose from a long list of transports already developed, like InMemory, RabbitMQ or Azure Service Bus, or you can develop your own. It depends on your architectural model.

Another key piece, necessary in scenarios such as publish/subscribe, is the subscription storage. Every time a subscription is added to a topic, Rebus needs to keep track of it; when a message is published, Rebus uses that storage to get the list of subscribers and dispatch the message to them.

In this post, we’ll see how to implement a simple subscription storage that stores subscriptions on the file system.

Extending Rebus: Implementing the ISubscriptionStorage interface

The first thing we need to do is implement the ISubscriptionStorage interface:

public interface ISubscriptionStorage

{

/// <summary>

/// Gets all destination addresses for the given topic

/// </summary>

Task<string[]> GetSubscriberAddresses(string topic);

/// <summary>

/// Registers the given <paramref name="subscriberAddress"/> as a subscriber of the given topic

/// </summary>

Task RegisterSubscriber(string topic, string subscriberAddress);

/// <summary>

/// Unregisters the given <paramref name="subscriberAddress"/> as a subscriber of the given topic

/// </summary>

Task UnregisterSubscriber(string topic, string subscriberAddress);

/// <summary>

/// Gets whether the subscription storage is centralized and thus supports bypassing the usual subscription request

/// (in a fully distributed architecture, a subscription is established by sending a <see cref="SubscribeRequest"/>

/// to the owner of a given topic, who then remembers the subscriber somehow - if the subscription storage is

/// centralized, the message exchange can be bypassed, and the subscription can be established directly by

/// having the subscriber register itself)

/// </summary>

bool IsCentralized { get; }

}

So, now we proceed by creating the FileSystemSubscriptionStorage that implements the ISubscriptionStorage:

internal class FileSystemSubscriptionStorage : ISubscriptionStorage

{

private readonly string folderPath;

public FileSystemSubscriptionStorage(string folderPath)

{

this.folderPath = folderPath;

}

...

}

We need to know the root folder where subscribers will be stored, so the constructor accepts the full path as a parameter. The first method we are going to implement is RegisterSubscriber:

public Task RegisterSubscriber(string topic, string subscriberAddress)

{

return Task.Run(() =>

{

var topicPath = Path.Combine(folderPath, Hash(topic));

if (!Directory.Exists(topicPath))

{

Directory.CreateDirectory(topicPath);

}

var subscriberAddressFile = Path.Combine(topicPath, Hash(subscriberAddress) + ".subscriber");

if (!File.Exists(subscriberAddressFile))

{

File.WriteAllText(subscriberAddressFile, subscriberAddress);

}

});

}

The RegisterSubscriber method accepts two parameters: topic and subscriberAddress. In our implementation, we create a folder for each topic and then a file for each subscriber. Both names are derived from a simple hash, so we always get a valid path without invalid characters.

The file will be a simple text file with the clear subscriberAddress.
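The Hash helper used above is not shown in this post. As a minimal sketch, assuming any deterministic hash that yields path-safe characters is good enough, it could be written with SHA-256 and hex encoding. The class name SubscriptionPathHasher is hypothetical and only serves the example:

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

// Hypothetical helper (not part of Rebus): one possible shape for the Hash method.
internal static class SubscriptionPathHasher
{
    // Returns the SHA-256 digest of the value as 64 lowercase hex characters.
    // Hex output contains only 0-9 and a-f, so it is safe as a folder or file name.
    public static string Hash(string value)
    {
        using (var sha256 = SHA256.Create())
        {
            byte[] digest = sha256.ComputeHash(Encoding.UTF8.GetBytes(value));
            var builder = new StringBuilder(digest.Length * 2);
            foreach (byte b in digest)
            {
                builder.Append(b.ToString("x2"));
            }
            return builder.ToString();
        }
    }
}
```

Because the hash is deterministic, registering the same subscriber twice maps to the same file, which is why the File.Exists check is enough to avoid duplicates.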

The GetSubscriberAddresses method, instead, retrieves the list of subscribers for the given topic. So, we can simply read all the files in the topic folder to get the full list:

public Task<string[]> GetSubscriberAddresses(string topic)

{

return Task.Run(() =>

{

var topicPath = Path.Combine(folderPath, Hash(topic));

if (!Directory.Exists(topicPath))

{

return new string[0];

}

return Directory.GetFiles(topicPath, "*.subscriber").Select(f => File.ReadAllText(f)).ToArray();

});

}

Last, but not least, the UnregisterSubscriber method deletes the given subscriberAddress from the input topic:

public Task UnregisterSubscriber(string topic, string subscriberAddress)

{

return Task.Run(() =>

{

var topicPath = Path.Combine(folderPath, Hash(topic));

if (!Directory.Exists(topicPath))

{

// No folder for this topic means there is nothing to unregister

return;

}

var subscriberAddressFile = Path.Combine(topicPath, Hash(subscriberAddress) + ".subscriber");

if (File.Exists(subscriberAddressFile))

{

File.Delete(subscriberAddressFile);

}

});

}

Using the FileSystemSubscriptionStorage

Following the Configuration API patterns, we’ll develop an extension method to configure the FileSystemSubscriptionStorage:

public static class FileSystemSubscriptionStorageConfigurationExtensions

{

public static void UseFileSystem(this StandardConfigurer<ISubscriptionStorage> configurer, string folderPath)

{

configurer.Register(context =>

{

return new FileSystemSubscriptionStorage(folderPath);

});

}

}

Then, in the configuration section, we’ll use it in this way:

adapter = new BuiltinHandlerActivator();

Configure.With(adapter)

.Subscriptions(s => s.UseFileSystem(subscriptionsPath))

.Start();

Conclusion

In this post we have explored one way to extend Rebus. Today, a framework built with extensibility in mind is a great starting point. You can use it as is, or you can join the wonderful community and extend it.

Enjoy!

09 Mar 2020

Evolving is a necessary step to survive, and software architecture is no exception. Designing a gRPC service also means accepting that something may change in the future. So, what happens if we change the ProtoBuf definition?

Evolving a contract definition means that we can add a new field, for example, or remove an existing one. Or we could introduce a new service and deprecate an existing one. And obviously we’d like existing clients to keep working.

Let’s see what happens.

Break the ProtoBuf definition

We can start with the previously seen .proto file:

// The bookshelf service definition

service BookService {

// Save a book

rpc SaveBook (BookRequest) returns (BookReply);

}

// The BookRequest message contains the book to save

message BookRequest {

string title = 1;

string description = 2;

}

// The BookReply message contains the saved book

message BookReply {

int32 bookId = 1;

string title = 2;

string description = 3;

}

What happens if we change the message? Let’s explore the different ways we can break the contract!

Adding new fields

In the brand new version of our service we need to carry author information in the BookRequest message. To do that, we add a new message called Author and a new author field:

message BookRequest {

string title = 1;

string description = 2;

Author author = 3;

}

message Author {

string firstName = 1;

string lastName = 2;

}

Adding new fields will not break the contract, so all the previously generated clients will still work fine! When an old client omits the new fields, the server simply sees their default values. Note that in proto3, the syntax used by our .proto file, every field is optional: the required keyword exists only in proto2.

The most important thing is not the field name, but only the field number. Preserve it, don’t change the field types, and your contract will not be broken.

NOTE: The names of the message fields and their order are not important. Each field in the message definition has a unique field number, used to identify the field in the binary message format. Don’t change it in a live environment, it will break the contract!
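To see why the field number matters and the name does not, it can help to look at the bytes. The following is a minimal sketch that hand-encodes a single string field following the Protocol Buffers wire format, where the tag is (field number << 3) | wire type and strings use wire type 2 (length-delimited). The WireFormatSketch class is purely illustrative and deliberately limited to small field numbers and short strings; real code should rely on the generated classes from Google.Protobuf:

```csharp
using System;
using System.Collections.Generic;
using System.Text;

// Illustrative only: hand-encodes one string field in the protobuf wire format.
public static class WireFormatSketch
{
    private const int LengthDelimitedWireType = 2;

    public static byte[] EncodeStringField(int fieldNumber, string value)
    {
        byte[] payload = Encoding.UTF8.GetBytes(value);

        // Keep the sketch simple: tag and length must each fit in a single byte.
        if (fieldNumber < 1 || fieldNumber > 15)
        {
            throw new ArgumentOutOfRangeException(nameof(fieldNumber), "Sketch supports field numbers 1 to 15 only.");
        }
        if (payload.Length > 127)
        {
            throw new ArgumentOutOfRangeException(nameof(value), "Sketch supports payloads up to 127 bytes only.");
        }

        var bytes = new List<byte>();
        bytes.Add((byte)((fieldNumber << 3) | LengthDelimitedWireType)); // tag
        bytes.Add((byte)payload.Length);                                 // length
        bytes.AddRange(payload);                                         // UTF-8 value
        return bytes.ToArray();
    }
}
```

Encoding the title "1984" as field number 1 gives the bytes 0A-04-31-39-38-34. The name title never appears in the output, while changing the field number to 2 changes the first byte to 12, so an old client would no longer recognize the field.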

Remove a field

Can we remove a field from a message? Obviously we can, but all the old clients will continue to send the now unnecessary data. Note that if a client sends an unexpected field, the server will ignore it without throwing an exception.

If you are replacing a field with a new one, establish a plan to phase out the old field gradually:

- Introduce the new field in the message contract and leave the old field in place

- In the next release, log a warning when an old client still doesn’t send the new field

- Finally, two releases after introducing the new field, remove the old field and accept the value only from the new one

Obviously you could adapt the plan as you wish!

Note that if you only want to rename a field without changing its type or field number, do it: the binary format does not carry field names, so no one will notice on the wire.

Conclusion

Things can change and your gRPC service must evolve. Don’t worry, do it carefully.

Enjoy!

check full code on github

01 Mar 2020

If you think the ‘g’ in gRPC stands for Google, you are not wrong. But actually, the gRPC team changes the meaning of ‘g’ with every release. In short, ‘g’ stands for:

- 1.0 ‘g’ stands for ‘gRPC’

- 1.1 ‘g’ stands for ‘good’

- 1.2 ‘g’ stands for ‘green’

- 1.3 ‘g’ stands for ‘gentle’

- 1.4 ‘g’ stands for ‘gregarious’

- 1.6 ‘g’ stands for ‘garcia’

- 1.7 ‘g’ stands for ‘gambit’

- 1.8 ‘g’ stands for ‘generous’

- 1.9 ‘g’ stands for ‘glossy’

- 1.10 ‘g’ stands for ‘glamorous’

- 1.11 ‘g’ stands for ‘gorgeous’

- 1.12 ‘g’ stands for ‘glorious’

- 1.13 ‘g’ stands for ‘gloriosa’

- 1.14 ‘g’ stands for ‘gladiolus’

- 1.15 ‘g’ stands for ‘glider’

- 1.16 ‘g’ stands for ‘gao’

- 1.17 ‘g’ stands for ‘gizmo’

- 1.18 ‘g’ stands for ‘goose’

- 1.19 ‘g’ stands for ‘gold’

- 1.20 ‘g’ stands for ‘godric’

- 1.21 ‘g’ stands for ‘gandalf’

- 1.22 ‘g’ stands for ‘gale’

- 1.23 ‘g’ stands for ‘gangnam’

- 1.24 ‘g’ stands for ‘ganges’

- 1.25 ‘g’ stands for ‘game’

- 1.26 ‘g’ stands for ‘gon’

- 1.27 ‘g’ stands for ‘guantao’

- 1.28 ‘g’ stands for ‘galactic’

check full info on github

Enjoy!

11 Jan 2020

In the previous posts of the series about gRPC, we have seen how to build a simple gRPC request/reply service and a gRPC server streaming service by using .NET Core and the new grpc-dotnet, the managed library entirely written in C#. Now it’s time to create and build a .NET gRPC client. And it’s really easy to do.

First of all, we need to create a client project. For the purpose of this article, a simple console project will be enough. So, you can open the terminal, go to your preferred folder and execute the following command:

dotnet new console -o GrpcClient

Then go to the folder just created and add the necessary reference with the following commands:

dotnet add package Google.Protobuf

dotnet add package Grpc.Net.Client

dotnet add package Grpc.Tools

Now, we can create the bookshelf.proto file (full code available on my github repository):

syntax = "proto3";

option csharp_namespace = "BookshelfService";

package BookshelfService;

// The bookshelf service definition.

service BookService {

// Get full list of books

rpc GetAllBooks (AllBooksRequest) returns (stream AllBooksReply);

// Save a Book

rpc Save (NewBookRequest) returns (NewBookReply);

}

// The request message containing the number of items per page.

message AllBooksRequest {

int32 itemsPerPage = 1;

}

// The reply message containing a page of books.

message AllBooksReply {

repeated Book Books = 1;

}

message Book {

string title = 1;

string description = 2;

}

// The request message containing the book's title and description.

message NewBookRequest {

string title = 1;

string description = 2;

}

// The response message containing the book id.

message NewBookReply {

string id = 1;

}

We can then add the newly created file to the project by using the dotnet-grpc CLI. If you haven’t installed it yet, execute the following command:

dotnet tool install -g dotnet-grpc

Then add the bookshelf.proto to the client project:

dotnet grpc add-file bookshelf.proto --services Client

Finally, be sure to set the right GrpcServices value on the Protobuf element in your .csproj file. The GrpcServices attribute decides which kind of gRPC code is generated; the accepted values are Both, Client, Default, None and Server:

<ItemGroup>

<Protobuf Include="..\Protos\bookshelf.proto" GrpcServices="Client" />

</ItemGroup>

Let’s start coding

Calling a gRPC service is a very simple operation. Just create the channel for the service endpoint, and then pass it to the generated client as a constructor parameter. Now you can use the client instance to invoke the service methods:

using (var channel = GrpcChannel.ForAddress("http://localhost:5000"))

{

var request = new NewBookRequest();

request.Title = "1984";

request.Description = "A George Orwell novel";

var client = new BookService.BookServiceClient(channel);

client.Save(request);

}

NOTE: if you are on macOS, HTTP/2 over TLS is still not supported, so the service has to run without TLS. To let the client call an unencrypted HTTP/2 endpoint, set the following switch before connecting to the service: AppContext.SetSwitch("System.Net.Http.SocketsHttpHandler.Http2UnencryptedSupport", true);

Enjoy!

check full code on github

15 Dec 2019

In the first post of this series on .NET Core gRPC services, we have seen how to build a simple request-reply service by using .NET Core 3 and the brand new grpc-dotnet library entirely written in C#.

Now, it’s time to extend our scenario by exploring the next kind of service: server streaming.

NOTE: Remember that gRPC offers four kinds of service: request-reply, server streaming, client streaming, and bidirectional streaming. We’ll see the others in dedicated posts.

Server Streaming Scenarios

First of all, what is server streaming? This is an excerpt from the gRPC site:

Server streaming RPCs where the client sends a request to the server and gets a stream to read a sequence of messages back. The client reads from the returned stream until there are no more messages. gRPC guarantees message ordering within an individual RPC call.

Typically, server streaming is useful when you have a set of data that needs to be sent to the client continuously while the server is still working on it. Let me explain with an example: imagine you need to send back a list of items. Instead of sending the full list in a single message, with bad performance, you can send back a block of n items per message, allowing the client to start processing asynchronously. This is a very basic usage of server streaming.

Ok, now we can start coding

Based on the BookshelfService implemented in the previous post and available on my github repository, we must update the bookshelf.proto by adding a new service method called GetAllBooks and the related AllBooksRequest and AllBooksReply messages. That method will return the full list of books from our shelf:

// The bookshelf service definition

service BookService {

// Get full list of books

rpc GetAllBooks (AllBooksRequest) returns (stream AllBooksReply);

}

// The Request message containing specific parameters

message AllBooksRequest {

int32 itemsPerPage = 1;

}

// The Reply message containing the book list

message AllBooksReply {

repeated Book Books = 1;

}

// The Book message represents a book instance

message Book {

string title = 1;

string description = 2;

}

After changing the .proto file, now you’ll be able to override the GetAllBooks method in the BookshelfService class to implement the server-side logic:

public override async Task GetAllBooks(AllBooksRequest request, IServerStreamWriter<AllBooksReply> responseStream, ServerCallContext context)

{

var pageIndex = 0;

while (!context.CancellationToken.IsCancellationRequested)

{

var books = BooksManager.ReadAll(++pageIndex, request.ItemsPerPage);

if (!books.Any())

{

break;

}

var reply = new AllBooksReply();

reply.Books.AddRange(books);

await responseStream.WriteAsync(reply);

}

}
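The BooksManager.ReadAll method is not shown in this post. As a minimal sketch, assuming the books live in an in-memory collection and that the page index is 1-based (as the ++pageIndex call above suggests), the paging logic could look like this. The Paging class and its ReadPage method are hypothetical names used only for illustration:

```csharp
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper: a possible paging strategy behind BooksManager.ReadAll.
public static class Paging
{
    // Returns the items of the requested 1-based page, or an empty list
    // when the page is past the end or the arguments are not positive.
    public static IReadOnlyList<T> ReadPage<T>(IEnumerable<T> source, int pageIndex, int itemsPerPage)
    {
        if (pageIndex < 1 || itemsPerPage < 1)
        {
            return new List<T>();
        }

        return source
            .Skip((pageIndex - 1) * itemsPerPage)
            .Take(itemsPerPage)
            .ToList();
    }
}
```

Returning an empty page past the end of the data is what lets the while loop in GetAllBooks terminate through its books.Any() check. It also means that a request with itemsPerPage left at its default value of 0 ends the stream immediately instead of looping forever.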

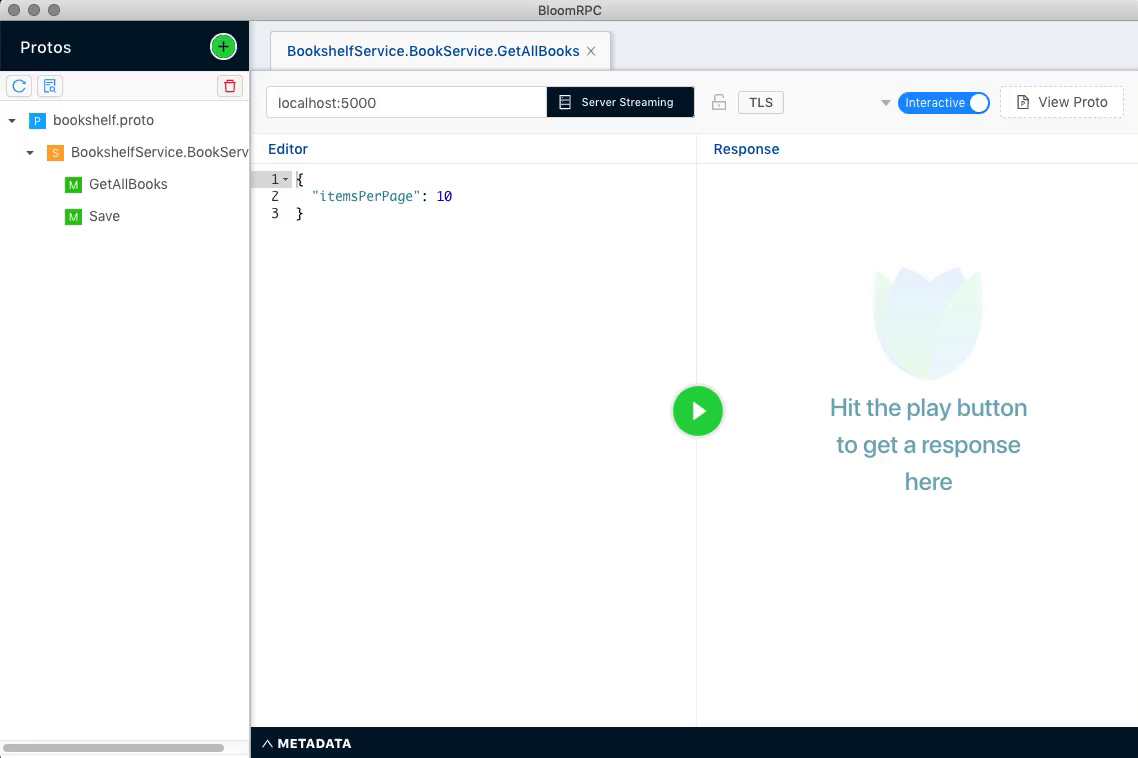

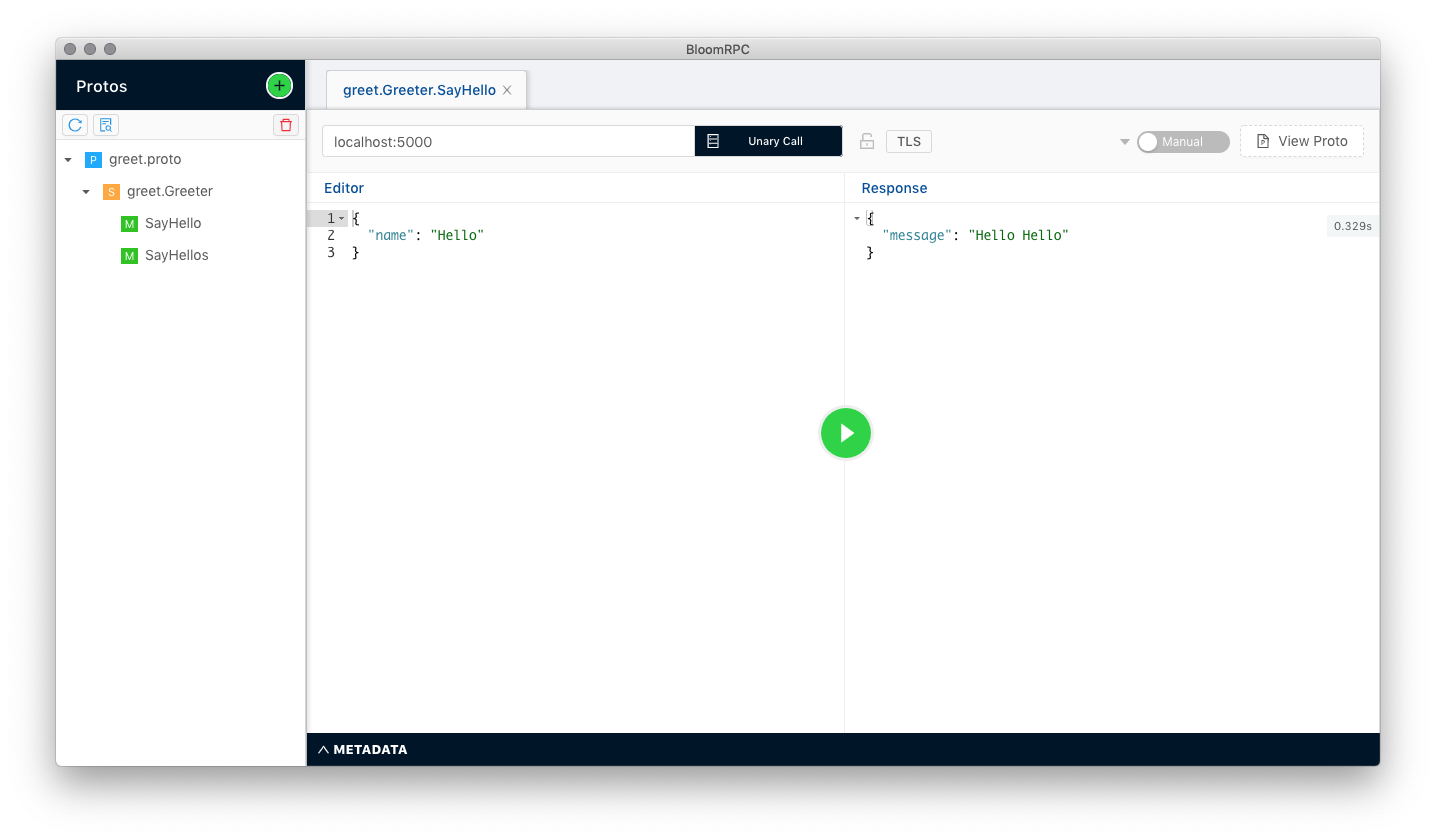

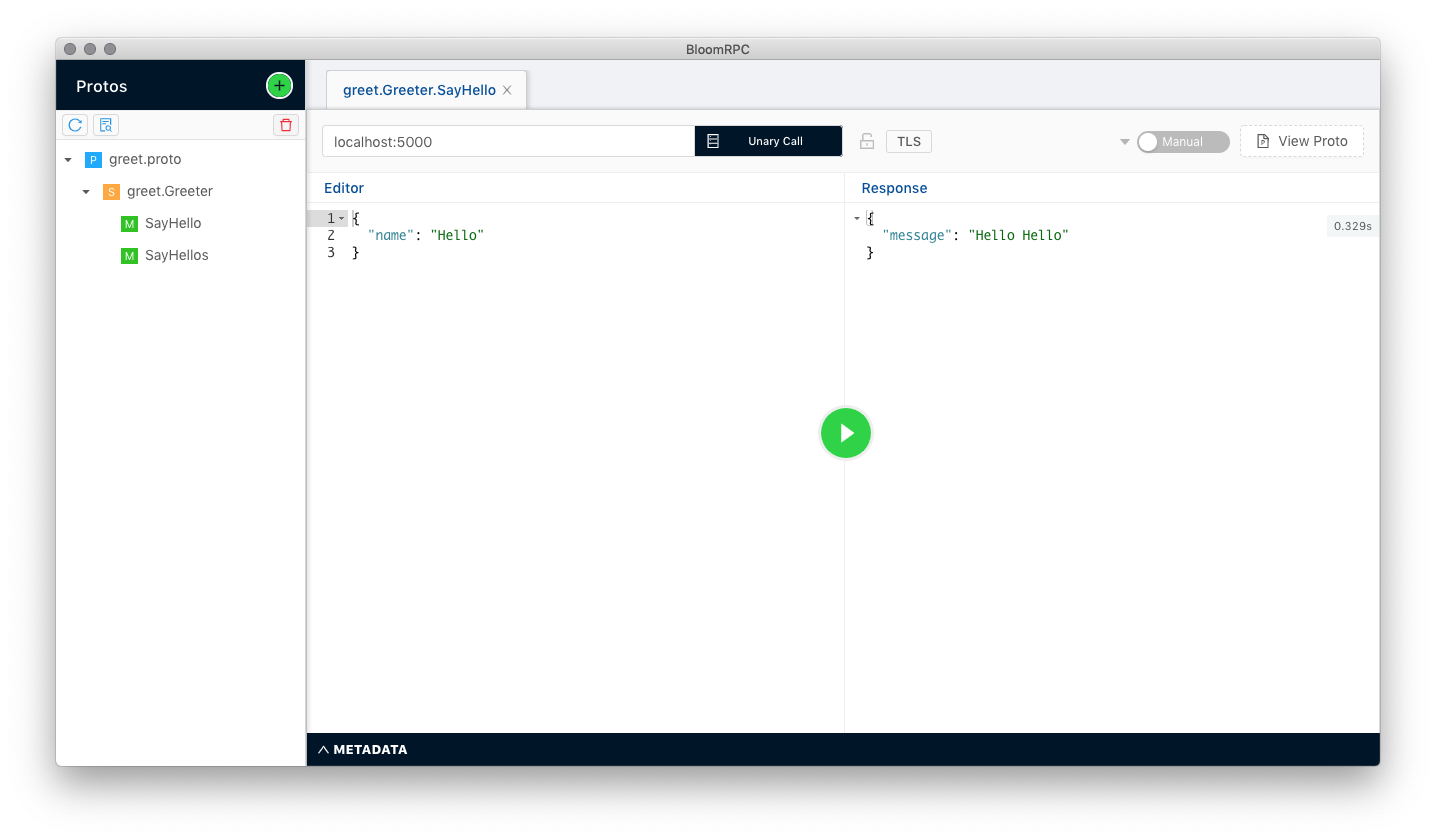

Finally, we can run the service with the dotnet run command and test it with BloomRPC:

In the next post we’ll see how to create the client for the server streaming service type.

Enjoy!

check full code on github

03 Dec 2019

If you are working with .NET Core on MacOS, you’ll probably get the following exception:

System.NotSupportedException: HTTP/2 over TLS is not supported on macOS due to missing ALPN support.

This happens because at the moment Kestrel doesn’t support HTTP/2 with TLS on macOS and older Windows versions such as Windows 7. To work around this issue, you have to disable TLS. Open your Program.cs file and update the CreateHostBuilder with the following lines:

public static IHostBuilder CreateHostBuilder(string[] args) =>

Host.CreateDefaultBuilder(args)

.ConfigureWebHostDefaults(webBuilder =>

{

webBuilder.ConfigureKestrel(options =>

{

options.ListenLocalhost(5000, o => o.Protocols = HttpProtocols.Http2);

});

webBuilder.UseStartup<Startup>();

});

That’s it! Now you can execute dotnet run command to start your gRPC service.

Enjoy!

15 Nov 2019

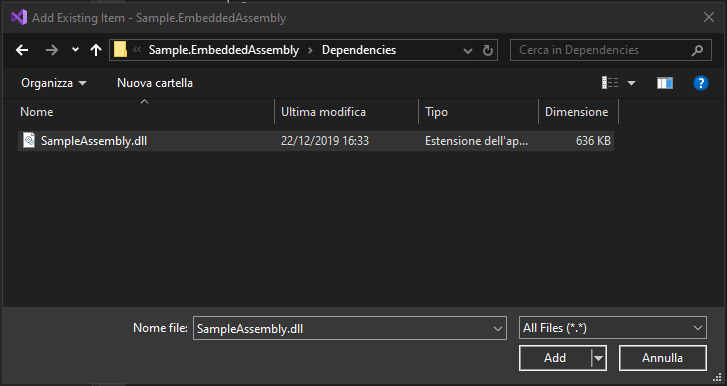

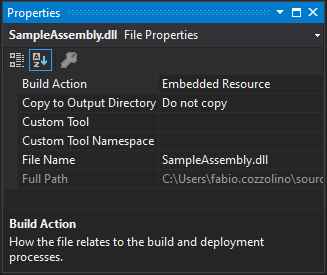

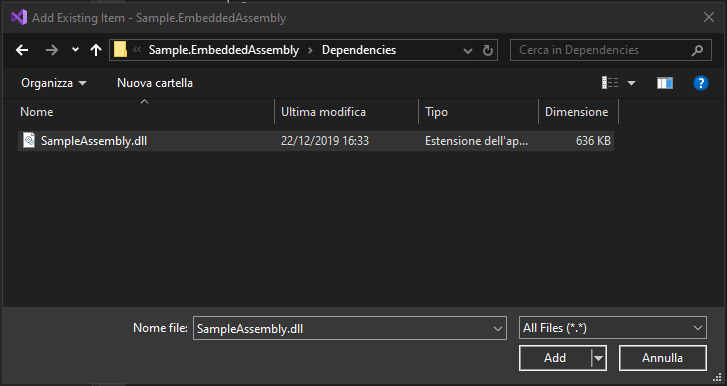

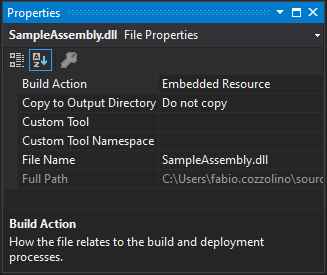

Sometimes, when your assembly has many dependencies, you may need to simplify deployment. One useful approach is to embed the dependent assemblies into the main one. To proceed, you need to add the assembly as an embedded resource to your project:

Then set the assembly as Embedded Resource:

Finally, the code is very simple. Subscribe to the AssemblyResolve event. That event is fired when the common language runtime is unable to locate and bind the requested assembly:

AppDomain.CurrentDomain.AssemblyResolve += new ResolveEventHandler(CurrentDomain_AssemblyResolve);

Then, you must implement the event handler, read the assembly from resources and call the Assembly.Load method to load it:

private Assembly CurrentDomain_AssemblyResolve(object sender, ResolveEventArgs args)

{

string assemblyName = args.Name.Split(',').First();

string assemblyFolder = "Resources";

string assemblyEmbeddedName = string.Format("{0}.{1}.{2}.dll",

this.GetType().Namespace, assemblyFolder, assemblyName);

Assembly currentAssembly = Assembly.GetExecutingAssembly();

using (var stream = currentAssembly.GetManifestResourceStream(assemblyEmbeddedName))

{

if (stream == null)

{

return null;

}

var buffer = new byte[(int)stream.Length];

stream.Read(buffer, 0, (int)stream.Length);

return Assembly.Load(buffer);

}

}

The above code assumes that the assemblies are stored in a folder named Resources and that the base namespace is the same as that of the current type.
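If GetManifestResourceStream returns null, the computed resource name probably does not match the name the compiler actually assigned. A minimal sketch for checking this, using the standard Assembly.GetManifestResourceNames method, is shown below. The EmbeddedResourceLister class is a hypothetical helper for illustration only:

```csharp
using System;
using System.Reflection;

// Hypothetical diagnostic helper: lists the resources embedded in an assembly.
public static class EmbeddedResourceLister
{
    // Returns the full names of all manifest resources in the given assembly.
    public static string[] GetNames(Assembly assembly)
    {
        return assembly.GetManifestResourceNames();
    }

    // Writes each embedded resource name of the executing assembly to the console.
    public static void PrintEmbeddedResources()
    {
        foreach (string name in GetNames(Assembly.GetExecutingAssembly()))
        {
            Console.WriteLine(name);
        }
    }
}
```

Calling PrintEmbeddedResources once at startup shows the exact names to compare against the value built in assemblyEmbeddedName.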

Conclusion

At the end, we can analyze the pros and cons of this approach:

- Pros

- A reduced set of assemblies to deploy is the main reason to embed dependencies in your assembly

- Cons

- Dependent assemblies need to be manually updated when new releases are available

This is a very simple way to read embedded assemblies. Be careful, use this approach only if it is really necessary.

Enjoy!

10 Nov 2019

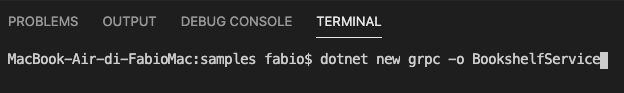

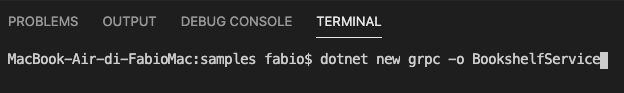

With this post it’s time to get into the first service built with gRPC. First of all, we’ll use the dotnet command to create our solution:

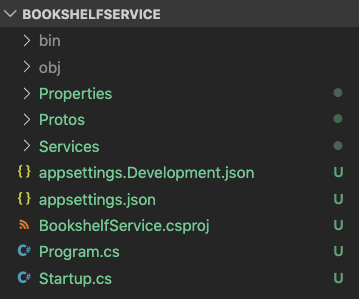

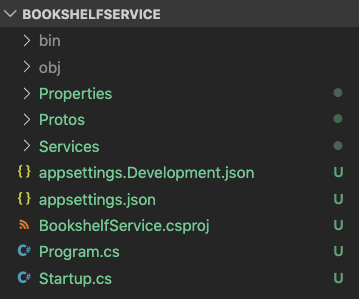

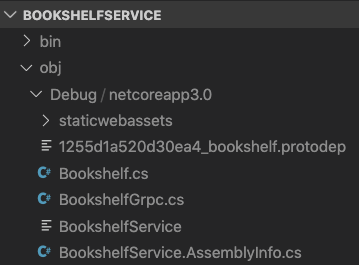

When finished, in Visual Studio Code the project will look like this:

We need to check the project and take a look into these items:

- The Services\*.cs files: contain the server-side implementation;

- The Protos\*.proto files: contain the Protocol Buffer file descriptors;

- The Startup.cs file: registers the gRPC service by calling the MapGrpcService method;

Build our BookshelfService

Our first version of BookshelfService implements a simple method that allows saving a Book into our Bookshelf. To proceed, we need to change the default greet.proto by renaming to our brand new bookshelf.proto and changing its content with the following code:

syntax = "proto3";

option csharp_namespace = "BookshelfService";

package BookshelfService;

// The bookshelf service definition.

service BookService {

// Save a Book

rpc Save (NewBookRequest) returns (NewBookReply);

}

// The request message containing the book's title and description.

message NewBookRequest {

string title = 1;

string description = 2;

}

// The response message containing the book id.

message NewBookReply {

string id = 1;

}

Now, we can open GreeterService.cs and rename both the class and the file to BookServiceImpl. Something like this:

namespace BookshelfService

{

public class BookServiceImpl : BookService.BookServiceBase

{

private readonly ILogger<BookServiceImpl> _logger;

public BookServiceImpl(ILogger<BookServiceImpl> logger)

{

_logger = logger;

}

public override async Task<NewBookReply> Save(NewBookRequest request, ServerCallContext context)

{

// service implementation

}

}

}

Finally, be sure to change the MapGrpcService call in your Startup.cs to this line:

endpoints.MapGrpcService<BookServiceImpl>();

Now, you’re ready to run your first gRPC service.

Wait, what happens? Let’s take a look under the hood

As previously said in this post the .proto file is responsible for the service definition. So, every time you change the .proto content, language-specific tools will generate the related objects. In our case, a set of C# classes. You can describe your service and the related messages by using a meta-language: the proto syntax.

As you can see, we have defined two messages (NewBookRequest and NewBookReply) and a service (BookService). The Protocol Buffer tool will generate the messages as .NET types and the service as an abstract base class. You’ll find the generated source file in the obj folder.

Finally, to implement our service, we only need to extend the BookServiceBase class and override the defined methods. For example:

public override Task<NewBookReply> Save(NewBookRequest request, ServerCallContext context)

{

var savedBook = BooksManager.SaveBook(request.Title, request.Description);

return Task.FromResult(new NewBookReply

{

Id = savedBook.BookId

});

}

Conclusion

This is a small example of a simple request/reply service integrated into an ASP.NET Core 3 application. You can test it by using BloomRPC or by creating a .NET client. We will see the second option in the next post.

Enjoy!

check full code on github

04 Nov 2019

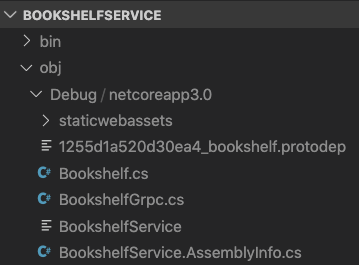

One of the most critical parts of a developer’s work is the test phase. If you want to test a SOAP service, SoapUI is one of the best-known tools. If you want to test a REST service, you can build your requests by using Fiddler or Postman. But if you want to test a gRPC service, what can you use?

Searching for a tool, I found BloomRPC. Inspired by Postman and developed with Electron and React, it’s free and open source, works on Windows, Linux and macOS, and, in my opinion, is one of the simplest tools for testing gRPC.

The usage is really simple:

- add your .proto file to the project

- set the endpoint

- fill up your request with sample data

- press play button

In this example I’m running and testing the Greeter server example from gRPC-dotnet.

You can download and check BloomRPC from GitHub: https://github.com/uw-labs/bloomrpc

Enjoy!

Disclaimer: I’m not affiliated, associated, authorized, endorsed by, or in any way officially connected with the BloomRPC project.

07 Oct 2019

In this post we introduced gRPC and its .NET implementations. In some cases, you’ll need to use a gRPC client from a legacy Win32 process but, since the NuGet package of gRPC C# is based on the x64 C++ native library, it will not work as expected.

Luckily, the solution is really simple:

Before you start building, you need the x86 version of the C++ library. You can proceed in two ways:

- follow the steps on the building page

- be sure that you have the x86 version of grpc_csharp_ext.dll in the cmake\build\x86\Release (or Debug) folder

To get the library, create a new project and add a NuGet reference to the Grpc package. Then go to packages\Grpc.Core.2.24.0\runtimes\win\native and copy the grpc_csharp_ext.x86.dll library into the previously mentioned cmake\build\x86\Release folder. Finally, be sure to rename the library to grpc_csharp_ext.dll. Now you’ll be able to build from Visual Studio 2019.

Enjoy!